Superpowers: The Claude Code Skills Framework Shipped as Markdown

Superpowers in One Paragraph

Superpowers (obra/superpowers) is a Claude Code plugin and multi-host agentic skills framework that ships an opinionated engineering culture as a folder of markdown files.

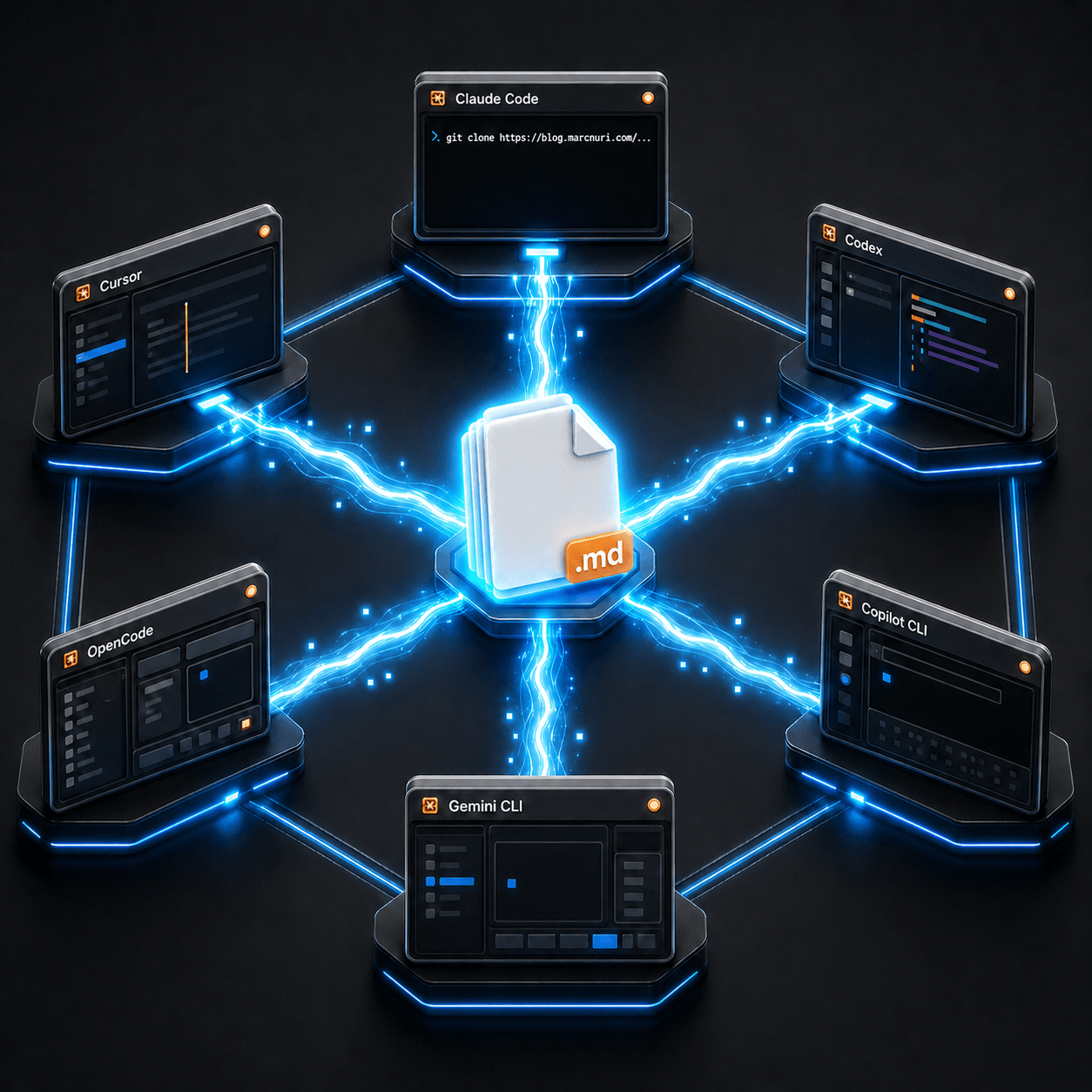

The same folder works across Claude Code, Cursor, OpenAI Codex, GitHub Copilot CLI, Gemini CLI, and OpenCode.

No fine-tuned model, no proprietary SDK, no agent platform.

Just a skills/ directory, fourteen SKILL.md files, and a session hook that tells the agent to read them before doing anything else.

Created by Jesse Vincent in October 2025, the project has accumulated more than 174,000 GitHub stars in seven months and shipped as an Anthropic marketplace plugin in early 2026. The popularity is not what makes it interesting. The real bet is that what AI coding agents are missing is not capability but discipline, and that discipline can be distributed as plain text.

Why an Agent Needs This

A modern coding agent is impressively capable. It also fails in remarkably consistent ways:

- It writes the code first, then the test, then forgets to run it.

- It declares the bug fixed because the failing example now seems to work.

- It patches the symptom and never investigates the root cause.

- It claims the build passes without ever running the build.

- And when called out, it knows perfectly well it should have done otherwise.

These are not knowledge gaps. The agent already has the right concepts in its training. Ask it to lecture you on TDD and it will. What it lacks is a mechanism that holds it to those concepts when shortcuts are easier. That is the hole Superpowers tries to fill.

What the Superpowers Skills Framework Is

Superpowers is unusually small. It consists of three pieces:

- A directory of fourteen skills, each a single SKILL.md file with YAML frontmatter and a few hundred words of instructions for one engineering practice: TDD, debugging, brainstorming, writing plans, code review, verification before completion.

- A session-start hook that injects a short bootstrap document, reportedly under two thousand tokens, telling the agent to invoke a relevant skill before it does anything else.

- Per-host plugin manifests that let each agent host discover the same skills in its own way.
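To make the first piece concrete, here is a minimal sketch of what reading one skill file might look like. It is not code from the repository: the `Skill` dataclass, the `parse_skill` helper, the `name` and `description` fields, and the example file are all assumptions made for illustration, and the parser only handles flat `key: value` frontmatter rather than full YAML.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    body: str


def parse_skill(text: str) -> Skill:
    """Split a SKILL.md-style document into frontmatter fields and body.

    Assumption: the frontmatter is flat `key: value` pairs between two
    `---` lines. A real loader would use a proper YAML parser.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing opening frontmatter delimiter")
    try:
        end = lines.index("---", 1)  # closing delimiter
    except ValueError:
        raise ValueError("missing closing frontmatter delimiter") from None

    fields = {}
    for line in lines[1:end]:
        if not line.strip():
            continue
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()

    body = "\n".join(lines[end + 1:]).strip()
    return Skill(fields.get("name", ""), fields.get("description", ""), body)


# Hypothetical skill file, invented for this example.
EXAMPLE = """---
name: example-skill
description: Use when starting any change that touches production code
---
# Example Skill

Write the failing test first.
"""

skill = parse_skill(EXAMPLE)
print(skill.name)
print(skill.description)
```

The point of the sketch is how little structure there is: a couple of metadata fields that help the agent decide when a skill applies, and a body of plain instructions.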

That is the entire payload. Engineering culture, distributed as a git repository you can skim in an hour.

The Methodology in One Cycle

A typical task moves through brainstorming, a git worktree, a written plan, subagent-driven implementation with TDD inside, a code-review pass from a fresh agent, and a finishing step that closes out the branch. Each phase has its own skill, and the next phase will not begin until the previous one is done. The workflow is the skills, in order.
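The gating described above can be pictured as a tiny state machine. This is only a sketch of the idea: in Superpowers the ordering is enforced by the instructions in the skills, not by code, and the phase labels and the `TaskCycle` class below are hypothetical names derived from the paragraph.

```python
from typing import Optional

# Phase labels paraphrased from the text; they are illustrative, not skill names.
PHASES = [
    "brainstorming",
    "git-worktree",
    "written-plan",
    "subagent-implementation",
    "code-review",
    "finishing-branch",
]


class TaskCycle:
    """Tracks one task and refuses to complete a phase out of order."""

    def __init__(self) -> None:
        self.completed = []

    def next_phase(self) -> Optional[str]:
        """Return the phase that must happen next, or None when done."""
        if len(self.completed) == len(PHASES):
            return None
        return PHASES[len(self.completed)]

    def complete(self, phase: str) -> None:
        expected = self.next_phase()
        if phase != expected:
            raise RuntimeError(
                f"cannot complete {phase!r}; next phase is {expected!r}"
            )
        self.completed.append(phase)


cycle = TaskCycle()
cycle.complete("brainstorming")
try:
    cycle.complete("code-review")  # skipping ahead is rejected
except RuntimeError as err:
    print(err)
```

Jumping straight to review raises an error because the worktree, plan, and implementation phases have not been completed yet.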

This is the discipline layer for one task at a time. What gets layered on top once you are running many such cycles in parallel is a separate climb, which I outlined in the missing levels of AI-assisted development.

A Tour of Representative Skills

The repository ships fourteen skills. Six of them carry most of the weight:

- brainstorming: refuses to write code until the agent has asked clarifying questions and proposed a design you can accept.

- writing-plans: breaks a feature into two-to-five-minute tasks, with file paths and tests written down before any code is touched.

- test-driven-development: enforces classic red/green/refactor and treats "I'll write the test after" as grounds to delete the implementation and start over.

- systematic-debugging: a four-phase process that explicitly forbids fixing what you have not understood.

- subagent-driven-development: dispatches the actual implementation to a fresh subagent that has only the plan and the tests, then sends a second agent to review the result.

- verification-before-completion: requires the agent to run the verification command itself and read the output before claiming anything is done.

The remaining eight cover git worktrees, executing plans, requesting and receiving code review, parallel agent dispatch, finishing branches, the bootstrap using-superpowers skill itself, and the meta-skill of authoring new skills.

Multi-Host Skills: Not Just Claude Code

Although Anthropic's plugin marketplace is the most visible distribution channel, Superpowers is not a Claude-only project.

The same skills/ directory powers Cursor, OpenAI Codex (CLI and app), GitHub Copilot CLI, Gemini CLI (via extension), and the open-source OpenCode harness.

Each host gets a thin manifest such as .claude-plugin/, .cursor-plugin/, .codex-plugin/, .opencode/, or gemini-extension.json that points its own discovery mechanism at the same markdown.
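As a rough illustration of how thin that layer is, the sketch below checks which of the manifest paths named above exist in a checkout. The `HOST_MANIFESTS` mapping and `detect_hosts` helper are hypothetical. The pairing of each path with a host is inferred from the path names, and the demo runs against a throwaway directory rather than the real repository.

```python
import tempfile
from pathlib import Path
from typing import List

# Manifest paths as named in the text; host pairing inferred from the names.
HOST_MANIFESTS = {
    "Claude Code": ".claude-plugin",
    "Cursor": ".cursor-plugin",
    "OpenAI Codex": ".codex-plugin",
    "OpenCode": ".opencode",
    "Gemini CLI": "gemini-extension.json",
}


def detect_hosts(repo_root: Path) -> List[str]:
    """Return the hosts whose manifest path exists under repo_root."""
    return [
        host
        for host, rel_path in HOST_MANIFESTS.items()
        if (repo_root / rel_path).exists()
    ]


# Demo against a temporary directory with two fake manifests.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / ".claude-plugin").mkdir()
    (root / "gemini-extension.json").write_text("{}")
    print(detect_hosts(root))
```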

The skills themselves are host-agnostic. Superpowers is portable engineering culture, not a feature of any single agent.

The Iron Laws: Discipline as Anti-Rationalization

Read enough of the skill files and a pattern emerges. Each rigid skill opens with a capitalized, non-negotiable rule, an Iron Law, followed by a table of red flags: the rationalizations the agent is most likely to use to skip the rule.

The TDD skill opens with:

NO PRODUCTION CODE WITHOUT A FAILING TEST FIRST

Verification's Iron Law is:

NO COMPLETION CLAIMS WITHOUT FRESH VERIFICATION EVIDENCE

Debugging's is:

NO FIXES WITHOUT ROOT CAUSE INVESTIGATION FIRST

The accompanying red flags are unsparing. TDD flags tests passing on first run, keeping deleted code as reference, and the eternal "just this once". Verification flags the words "should", "probably", and "seems to".

These are not motivational posters. They read like a senior engineer's code-review feedback codified into instructions. The target is not teaching the agent, because it already knows the rules. The target is preventing the agent from talking itself out of following them.
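To see why the verification red flags are checkable at all, consider a toy version written as code. The skills themselves are prose instructions, not a linter, so this is purely an analogy: the `find_hedges` function and the sample claims are invented for illustration, and only the three hedge phrases come from the description above.

```python
import re
from typing import List

# Hedge phrases the verification skill is described as flagging.
HEDGES = ("should", "probably", "seems to")


def find_hedges(claim: str) -> List[str]:
    """Return the hedge phrases that appear as whole words in a claim."""
    found = []
    for phrase in HEDGES:
        pattern = rf"\b{re.escape(phrase)}\b"
        if re.search(pattern, claim, flags=re.IGNORECASE):
            found.append(phrase)
    return found


# Invented completion claims: one hedged, one backed by a run.
print(find_hedges("The build should pass now and it seems to work."))
print(find_hedges("Ran the test suite and read the output: all tests passed."))
```

A claim that trips this check is being made without evidence. A claim that passes describes something the agent actually ran and read.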

What to Watch Next

Superpowers ships fast. Vincent has published several follow-ups on his blog since the origin post, including a fifth major release in March 2026 and a candid post on the "agentic slop" PR problem the project has exposed as it has scaled.

The interesting question is not whether the discipline approach can work. The skill files strongly suggest that it can. The more interesting question is which other parts of an engineering culture, such as code review style, on-call runbooks, security posture, or release management, turn out to be representable as markdown that a coding agent can be made to follow. Once each agent runs with this kind of discipline, coordinating many of them becomes the next concern, which is exactly what the AI coding agent dashboard was built for.