Isotope Mail: How to deploy Isotope+Traefik into Kubernetes

Introduction

Isotope Mail Client is a free, open-source webmail application and one of the side projects I've invested my spare time in during the last year. You can read more about Isotope's features in a previous blog post.

Although there is still no official release, the application is quite stable and usable. In this post, I'll show you how to deploy it to a Kubernetes cluster. For this tutorial I've used minikube and kubectl, but the same steps should be reproducible in a real K8s cluster.

Traefik v1

Although it's not part of Isotope itself, Traefik (or any alternative) is one of the main pieces of the deployment, as it acts as the API gateway (reverse proxy) and routes requests to the appropriate Isotope component/service.

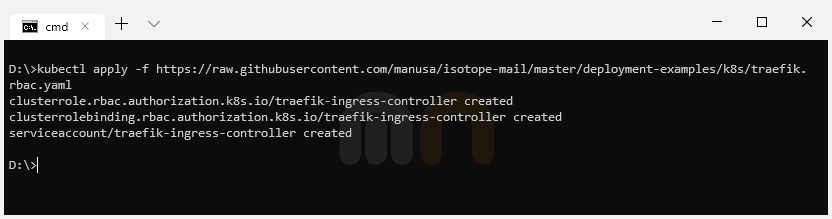

The first step is to create an Ingress controller for Traefik (if the cluster doesn't have one yet). We'll follow Traefik's official documentation to create it.

Role Based Access Control configuration (Kubernetes 1.6+ only)

Starting with version 1.6, Kubernetes provides Role-Based Access Control (RBAC) to allow more granular access control to resources based on the roles of individual users.

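To allow Traefik to access the Kubernetes API globally (across all namespaces), we need to create a ClusterRole and a ClusterRoleBinding for Traefik's ServiceAccount, which the same manifest creates in the kube-system namespace.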

```yaml
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
rules:
  - apiGroups:
      - ""
    resources:
      - services
      - endpoints
      - secrets
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - extensions
    resources:
      - ingresses
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - extensions
    resources:
      - ingresses/status
    verbs:
      - update
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: traefik-ingress-controller
subjects:
  - kind: ServiceAccount
    name: traefik-ingress-controller
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
```

```shell
kubectl apply -f https://raw.githubusercontent.com/manusa/isotope-mail/master/deployment-examples/k8s/traefik.rbac.yaml
```

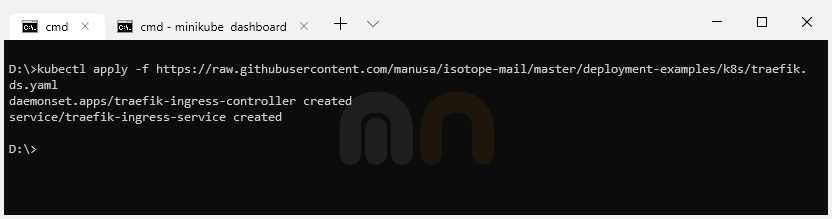
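To make the effect of these rules concrete, here is a minimal Python sketch of how they grant permissions. It assumes a simplified model of RBAC evaluation (a request is allowed if a single rule lists its API group, resource and verb) and ignores wildcards, resourceNames and non-resource URLs, which the real authorizer also handles; RULES and is_allowed are hypothetical names.

```python
# Simplified model of Kubernetes RBAC rule matching (illustrative only):
# a request is allowed when a single rule lists its API group, resource and verb.
# Wildcards, resourceNames and non-resource URLs are deliberately ignored.

# Rules copied from the traefik-ingress-controller ClusterRole above.
RULES = [
    {"apiGroups": [""],
     "resources": ["services", "endpoints", "secrets"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["extensions"],
     "resources": ["ingresses"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["extensions"],
     "resources": ["ingresses/status"],
     "verbs": ["update"]},
]


def is_allowed(rules, api_group, resource, verb):
    """Return True if any rule grants `verb` on `resource` in `api_group`."""
    return any(
        api_group in rule["apiGroups"]
        and resource in rule["resources"]
        and verb in rule["verbs"]
        for rule in rules
    )


if __name__ == "__main__":
    print(is_allowed(RULES, "", "services", "watch"))                    # True
    print(is_allowed(RULES, "extensions", "ingresses/status", "update"))  # True
    print(is_allowed(RULES, "", "secrets", "delete"))                    # False
```

Traefik can read Services, Endpoints, Secrets and Ingresses and update Ingress status, but nothing else. On a live cluster the authoritative answer comes from the API server, for example: kubectl auth can-i watch services --as=system:serviceaccount:kube-system:traefik-ingress-controller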

Deploy Traefik using a DaemonSet

The next step is to deploy the Traefik Ingress controller using a DaemonSet.

Traefik's official documentation also shows how to achieve this with a Deployment. Since this tutorial uses Minikube, we'll use a DaemonSet instead, because it's easier to configure and expose with Minikube (Minikube has trouble assigning external IPs to Ingresses when the Traefik Ingress controller is deployed as a Deployment).

```yaml
---
kind: DaemonSet
apiVersion: apps/v1
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
  labels:
    k8s-app: traefik-ingress-lb
spec:
  selector:
    matchLabels:
      k8s-app: traefik-ingress-lb
  template:
    metadata:
      labels:
        k8s-app: traefik-ingress-lb
        name: traefik-ingress-lb
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 60
      containers:
        - image: traefik:v1.7
          name: traefik-ingress-lb
          ports:
            - name: http
              containerPort: 80
              hostPort: 80
            - name: admin
              containerPort: 8080
              hostPort: 8080
          securityContext:
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
          args:
            - --api
            - --kubernetes
            - --logLevel=INFO
---
kind: Service
apiVersion: v1
metadata:
  name: traefik-ingress-service
  namespace: kube-system
spec:
  selector:
    k8s-app: traefik-ingress-lb
  ports:
    - protocol: TCP
      port: 80
      name: web
    - protocol: TCP
      port: 8080
      name: admin
```

```shell
kubectl apply -f https://raw.githubusercontent.com/manusa/isotope-mail/master/deployment-examples/k8s/traefik.ds.yaml
```

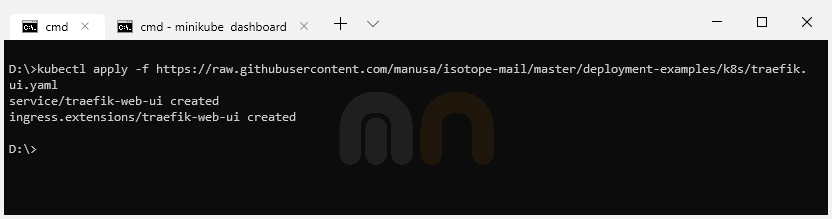

Traefik UI

Optionally, we can create a Service and an Ingress for Traefik's web UI dashboard to monitor the new Traefik DaemonSet.

```yaml
---
apiVersion: v1
kind: Service
metadata:
  name: traefik-web-ui
  namespace: kube-system
spec:
  selector:
    k8s-app: traefik-ingress-lb
  ports:
    - name: web
      port: 80
      targetPort: 8080
---
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: traefik-web-ui
  namespace: kube-system
spec:
  rules:
    - host: traefik-ui.minikube
      http:
        paths:
          - path: /
            backend:
              serviceName: traefik-web-ui
              servicePort: web
```

```shell
kubectl apply -f https://raw.githubusercontent.com/manusa/isotope-mail/master/deployment-examples/k8s/traefik.ui.yaml
```

Note that the Ingress configuration uses traefik-ui.minikube as the public host; in a production environment we would use a real hostname. An additional required step is to add the Kubernetes cluster IP (minikube ip / kubectl get ingress) to our local hosts file (/etc/hosts), mapped to that hostname.
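The hosts entry is a single line: the IP followed by the hostnames it should resolve. A small Python sketch of building it, assuming 192.168.99.100 as a placeholder for whatever minikube ip prints, and also including isotope.minikube, the host used later in this tutorial for Isotope itself; hosts_entry is a hypothetical helper.

```python
import ipaddress


def hosts_entry(cluster_ip, hostnames):
    """Build one /etc/hosts line that maps `cluster_ip` to every hostname."""
    # Raises ValueError for a malformed address instead of writing a bad line.
    ipaddress.ip_address(cluster_ip)
    return cluster_ip + "\t" + " ".join(hostnames)


if __name__ == "__main__":
    # Placeholder IP: replace it with the output of `minikube ip`.
    print(hosts_entry("192.168.99.100", ["traefik-ui.minikube", "isotope.minikube"]))
```

The printed line is then appended to /etc/hosts, which usually requires root privileges.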

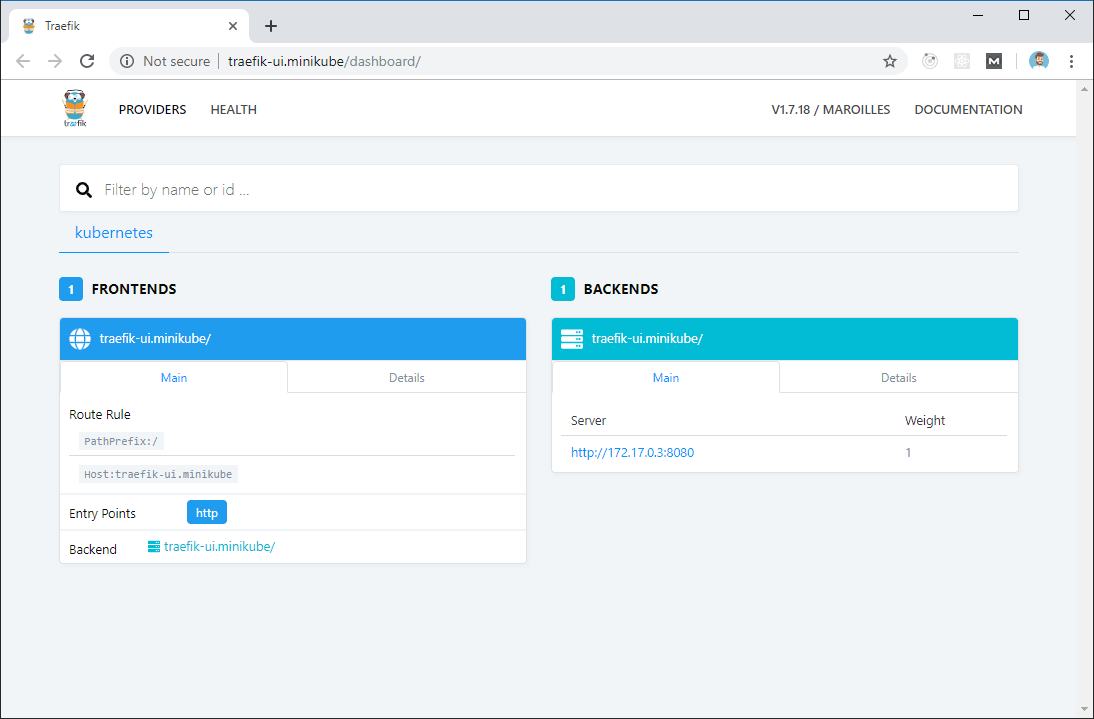

Finally, we can point our browser to traefik-ui.minikube to load Traefik’s dashboard.

Isotope

The last step is to deploy Isotope.

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: isotope-secrets
type: Opaque
data:
  encryptionPassword: U2VjcmV0SzhzUGFzd29yZA==
---
kind: Deployment
apiVersion: apps/v1
metadata:
  name: isotope-server
  labels:
    app: isotope
    component: server
spec:
  replicas: 1
  selector:
    matchLabels:
      app: isotope
      component: server
      version: latest
  template:
    metadata:
      labels:
        app: isotope
        component: server
        version: latest
    spec:
      containers:
        - name: isotope-server
          image: marcnuri/isotope:server-latest
          imagePullPolicy: Always
          ports:
            - containerPort: 9010
          env:
            - name: ENCRYPTION_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: isotope-secrets
                  key: encryptionPassword
          livenessProbe:
            httpGet:
              path: /actuator/health
              port: 9010
            failureThreshold: 6
            periodSeconds: 5
            # Use startupProbe instead if your k8s version supports it
            initialDelaySeconds: 60
          readinessProbe:
            httpGet:
              path: /actuator/health
              port: 9010
            failureThreshold: 2
            periodSeconds: 5
          # startupProbe:
          #   httpGet:
          #     path: /actuator/health
          #     port: 9010
          #   initialDelaySeconds: 20
          #   failureThreshold: 15
          #   periodSeconds: 10
---
kind: Deployment
apiVersion: apps/v1
metadata:
  name: isotope-client
  labels:
    app: isotope
    component: client
spec:
  replicas: 1
  selector:
    matchLabels:
      app: isotope
      component: client
      version: latest
  template:
    metadata:
      labels:
        app: isotope
        component: client
        version: latest
    spec:
      containers:
        - name: isotope-client
          image: marcnuri/isotope:client-latest
          imagePullPolicy: Always
          ports:
            - containerPort: 80
          livenessProbe:
            httpGet:
              path: /favicon.ico
              port: 80
            failureThreshold: 6
            periodSeconds: 5
---
apiVersion: v1
kind: Service
metadata:
  name: isotope-server
spec:
  ports:
    - name: http
      targetPort: 9010
      port: 80
  selector:
    app: isotope
    component: server
---
apiVersion: v1
kind: Service
metadata:
  name: isotope-client
spec:
  ports:
    - name: http
      targetPort: 80
      port: 80
  selector:
    app: isotope
    component: client
---
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: isotope
  annotations:
    kubernetes.io/ingress.class: traefik
    traefik.frontend.rule.type: PathPrefixStrip
spec:
  rules:
    - host: isotope.minikube
      http:
        paths:
          - path: /
            backend:
              serviceName: isotope-client
              servicePort: http
          - path: /api
            backend:
              serviceName: isotope-server
              servicePort: http
```

```shell
kubectl apply -f https://raw.githubusercontent.com/manusa/isotope-mail/master/deployment-examples/k8s/isotope.yaml
```

Secret

The first entry in the YAML configuration is a Kubernetes Secret, with its value Base64-encoded, that sets the symmetric encryption key for the Isotope Server component.

Isotope Server Deployment

The next entry is the Deployment configuration for the Isotope Server component. Because this Deployment is more complex than the Client one, the configuration common to both components is described in the next section (Isotope Client Deployment).

In the env section, we declare the ENCRYPTION_PASSWORD environment variable and assign it the value of the encryptionPassword key from the Secret declared in the previous entry. The variable is set in every Pod that Kubernetes creates from this Deployment, so all Pods share the same encryption key and are compatible with each other.

We also define two probes so that Kubernetes (and, through it, Traefik) knows when the Isotope Server Pods are healthy and ready to receive traffic. The liveness probe determines whether the container is still alive; if it keeps failing, Kubernetes restarts the container, because the application state is considered broken. We also set initialDelaySeconds, since the application takes a while to start up, to avoid false failures while it boots. If your Kubernetes version supports startup probes, it's better to define one (see the commented-out startupProbe block) instead of relying on initialDelaySeconds.

A readiness probe is also defined. It's similar to the liveness probe, but it indicates whether the application is ready to receive traffic. If the application is temporarily unable to accept traffic (for example, because it has reached its maximum number of connections), the failing probe marks the Pod as not ready so it stops receiving traffic from the Service, but Kubernetes won't restart it.

For both probes, we use an HTTP request to Spring Boot Actuator's health check endpoint (/actuator/health), which the Isotope Server component exposes.
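How these thresholds interact can be reasoned about with a small idealized model. The Python sketch below assumes each probe runs exactly at initialDelaySeconds + i * periodSeconds (real kubelets add jitter and also apply timeoutSeconds) and uses the kubelet rule that an HTTP probe succeeds for status codes from 200 to 399; http_probe_ok and probe_trigger_time are hypothetical helpers, and 503 is used as an example of an unhealthy Actuator response.

```python
def http_probe_ok(status_code):
    """Kubelet treats an httpGet probe as successful for 200 <= code < 400."""
    return 200 <= status_code < 400


def probe_trigger_time(status_codes, initial_delay, period, failure_threshold):
    """Seconds after container start at which the probe reaches
    `failure_threshold` consecutive failures, or None if it never does.

    Idealized model: probe i runs exactly at initial_delay + i * period.
    """
    consecutive = 0
    for i, code in enumerate(status_codes):
        if http_probe_ok(code):
            consecutive = 0  # any success resets the failure streak
            continue
        consecutive += 1
        if consecutive >= failure_threshold:
            return initial_delay + i * period
    return None


if __name__ == "__main__":
    # isotope-server liveness: initialDelaySeconds 60, periodSeconds 5,
    # failureThreshold 6. A health endpoint that keeps answering 503
    # leads to a container restart about 85 seconds after start.
    print(probe_trigger_time([503] * 10, 60, 5, 6))  # 85
    # Readiness: no initialDelaySeconds (defaults to 0), failureThreshold 2.
    # Two failures in a row mark the Pod as not ready.
    print(probe_trigger_time([200, 503, 503], 0, 5, 2))  # 10
```

With the commented-out startupProbe (initialDelaySeconds 20, failureThreshold 15, periodSeconds 10), the same model gives a slow-starting server about 160 seconds before Kubernetes gives up on it.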

Isotope Client Deployment

As we did for the Server component, we now define a Deployment for Isotope's Client component.

In the spec section, we define the number of Pod replicas we want, in this case one. The selector property, optional in earlier API versions, is mandatory in apps/v1. Kubernetes uses it to identify the Pods that belong to the Deployment, count how many are actually running, and spin up more replicas if necessary, so its labels must match the labels in the template section.
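For apps/v1 Deployments, the API server rejects a selector that doesn't match the template labels, so it can be worth checking before applying. A minimal Python sketch, assuming only matchLabels is used (matchExpressions are ignored); selector_matches is a hypothetical helper.

```python
def selector_matches(match_labels, template_labels):
    """True if every matchLabels key/value is present in the Pod template labels."""
    return all(template_labels.get(key) == value for key, value in match_labels.items())


if __name__ == "__main__":
    selector = {"app": "isotope", "component": "client", "version": "latest"}
    # Labels from the isotope-client Pod template: accepted.
    print(selector_matches(selector, {"app": "isotope", "component": "client", "version": "latest"}))  # True
    # Dropping `version` from the template would make the Deployment invalid.
    print(selector_matches(selector, {"app": "isotope", "component": "client"}))  # False
```

Template labels may contain extra keys; only the selector's keys have to be present with the same values.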

The template entry within the spec section defines the Pod specification. For Isotope Client, we define a Pod with a single container based on the marcnuri/isotope:client-latest Docker image, exposing HTTP port 80.

As with the Isotope Server Deployment, we define a simple liveness probe so that, if the container becomes unstable and reaches a broken state, Kubernetes restarts it automatically.

Services

The next section of the configuration defines a Service for each of the previous Deployments (server and client) to expose them within the cluster.

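To simplify the Ingress definition, both Services expose HTTP port 80; the isotope-server Service forwards it to the container's port 9010 (targetPort), and the isotope-client Service to port 80.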
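How an Ingress backend reaches a container can be traced through each Service's port and targetPort. The Python sketch below hard-codes the two Services from isotope.yaml and resolves a backend given by port name (as the Ingress does with servicePort: http) or by port number; SERVICES and resolve_backend are hypothetical names, and this is a lookup illustration, not how Kubernetes implements Service routing.

```python
# Service ports from isotope.yaml: service -> {port name: (port, targetPort)}.
SERVICES = {
    "isotope-server": {"http": (80, 9010)},
    "isotope-client": {"http": (80, 80)},
}


def resolve_backend(service_name, service_port):
    """Return (port, targetPort) for an Ingress backend.

    `service_port` may be the Service port's name (e.g. "http", as used in the
    Ingress) or its number (e.g. 80). Raises KeyError if nothing matches.
    """
    for name, (port, target_port) in SERVICES[service_name].items():
        if service_port in (name, port):
            return port, target_port
    raise KeyError(f"{service_name} has no port {service_port!r}")


if __name__ == "__main__":
    print(resolve_backend("isotope-server", "http"))  # (80, 9010)
    print(resolve_backend("isotope-client", 80))      # (80, 80)
```

Referring to ports by name in the Ingress means the container port can change later by editing only the Service's targetPort.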

Ingress

The final section of the configuration defines an Ingress handled by the Traefik Ingress controller deployed in the first steps of the tutorial (selected through the kubernetes.io/ingress.class: traefik annotation).

We'll use isotope.minikube as the public host, although in a production environment we should use a real, valid hostname. We'll also need to add an entry for this host to our /etc/hosts file.

Traefik routes traffic reaching http://isotope.minikube/api to the isotope-server Service, and the rest of the traffic reaching http://isotope.minikube/ to the isotope-client Service.

The traefik.frontend.rule.type: PathPrefixStrip annotation removes the /api prefix from requests before forwarding them to the isotope-server Service. This way, the Isotope Server component needs no additional modifications or configuration to work with this deployment.
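The routing and prefix stripping can be approximated in a few lines. This Python sketch assumes Traefik 1.x's default ordering, where longer rules take priority (so /api wins over /), treats matching as a plain string prefix, and keeps a leading slash after stripping; ROUTES and route are hypothetical names, and /api/some/endpoint is a placeholder path, not a real Isotope endpoint.

```python
# Ingress paths from the isotope Ingress: path prefix -> Service name.
ROUTES = {"/": "isotope-client", "/api": "isotope-server"}


def route(path, routes=ROUTES):
    """Return (service, forwarded_path) for a request path.

    The longest matching prefix wins; that prefix is stripped (PathPrefixStrip)
    and a leading slash is kept. Assumes `path` starts with "/".
    """
    prefix = max((p for p in routes if path.startswith(p)), key=len)
    forwarded = path[len(prefix):]
    if not forwarded.startswith("/"):
        forwarded = "/" + forwarded
    return routes[prefix], forwarded


if __name__ == "__main__":
    print(route("/api/some/endpoint"))  # ('isotope-server', '/some/endpoint')
    print(route("/index.html"))         # ('isotope-client', '/index.html')
```

Because matching is a plain string prefix in this model, a path such as /apidocs would also be sent to isotope-server.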

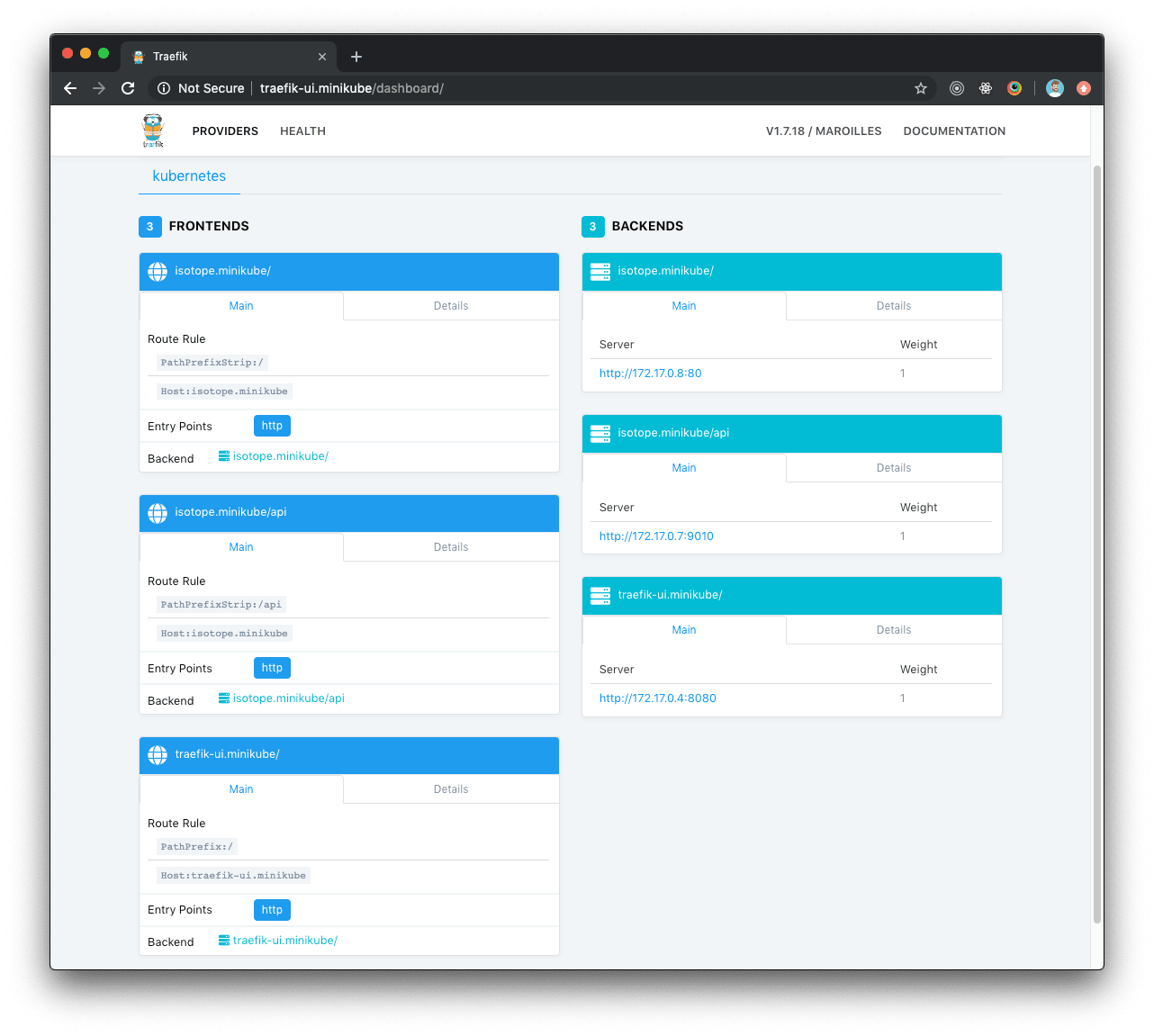

Traefik dashboard with Isotope

Once the Isotope configuration is deployed, the Traefik dashboard automatically updates and displays the new routes for Isotope.

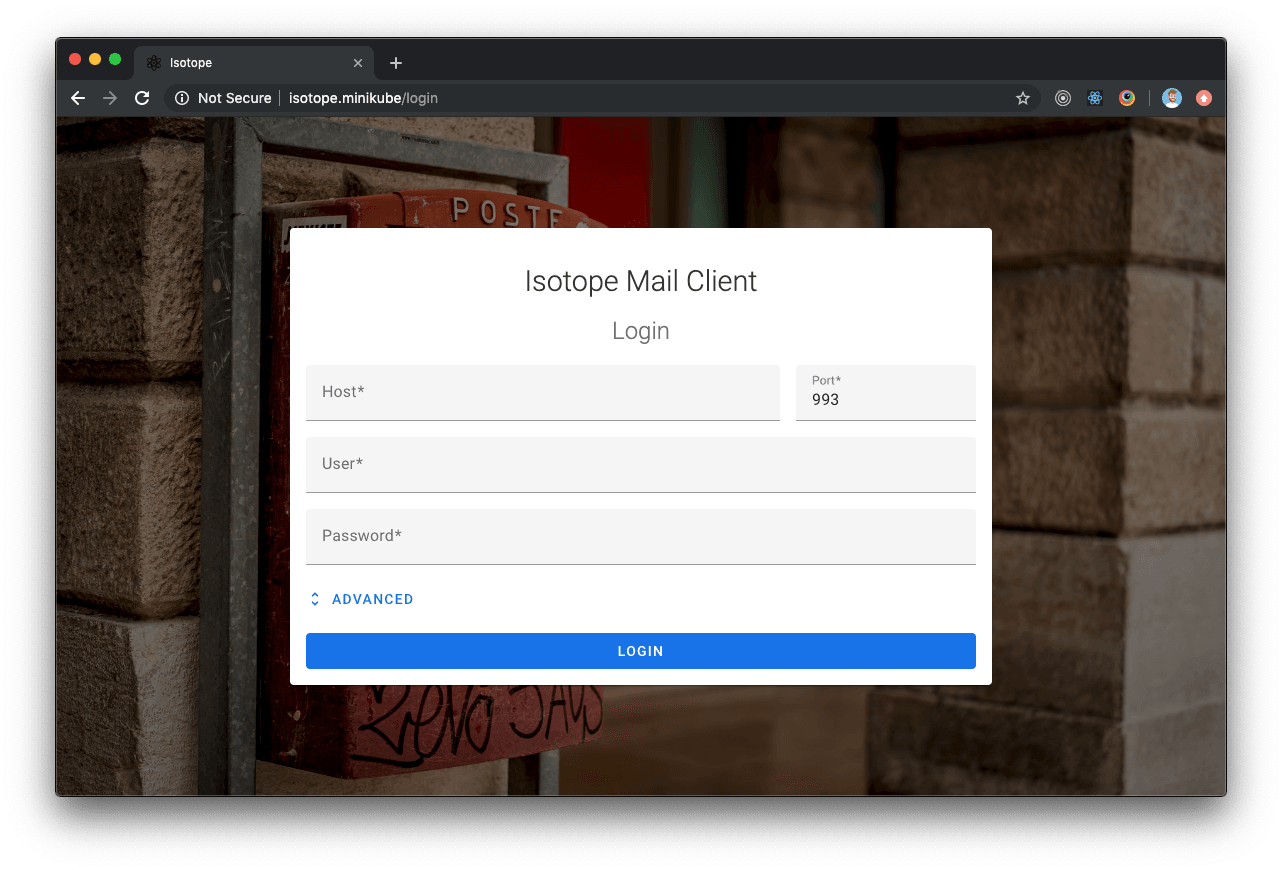

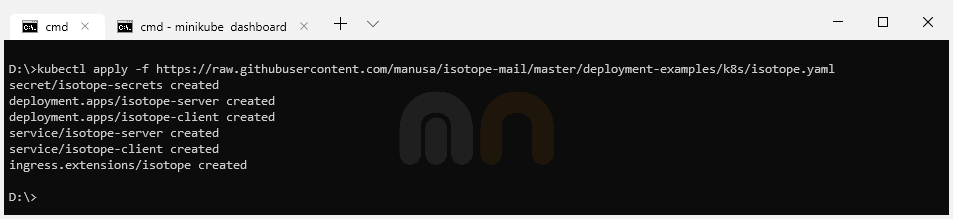

Isotope deployment

If everything went OK and the Traefik dashboard displays healthy Isotope components, we can now point our browser to http://isotope.minikube, where Isotope will be ready and accessible.
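Before opening the browser, it can be handy to check the Ingress from a script. A minimal Python sketch using only urllib: it sends the request to the cluster IP and puts the Ingress hostname in the Host header, which is what Traefik matches on, so it works even without the /etc/hosts entry. The IP is a placeholder for the output of minikube ip, and ingress_request and is_up are hypothetical helpers; the curl equivalent is curl -H "Host: isotope.minikube" http://$(minikube ip)/

```python
import urllib.request


def ingress_request(cluster_ip, host, path="/"):
    """Build a request aimed at the cluster IP whose Host header carries the
    Ingress hostname, which is what Traefik uses to pick the route."""
    return urllib.request.Request(f"http://{cluster_ip}{path}", headers={"Host": host})


def is_up(cluster_ip, host, path="/", timeout=5):
    """Return True if the route answers with a 2xx status (after redirects)."""
    try:
        request = ingress_request(cluster_ip, host, path)
        with urllib.request.urlopen(request, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except OSError:
        # URLError, HTTPError (4xx/5xx) and socket timeouts all derive from OSError.
        return False
```

Given the manifests above, is_up("192.168.99.100", "isotope.minikube") should check the client, and is_up("192.168.99.100", "isotope.minikube", "/api/actuator/health") should reach the server's health endpoint once /api is stripped.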